On December 13, 2024, during Nvidia's analyst day in Taiwan, CEO Jensen Huang dropped a bombshell that sent ripples through global markets. New U.S. export restrictions, finalized in late October, will prevent the company from selling its China-specific H20 AI graphics processing unit (GPU) to the world's second-largest economy. What was positioned as a compliant workaround to prior curbs is now off-limits, potentially costing Nvidia $10-12 billion or more in annual revenue, a stark reminder of how geopolitics is reshaping the AI boom.

Huang was candid: "We designed the H20 specifically for the China market to comply with U.S. regulations, but the rules changed after we built inventory." Shipments were slated for the fourth quarter of Nvidia's fiscal 2025, but the U.S. Department of Commerce's October 7 announcement expanded controls on high-bandwidth memory (HBM) chips, effectively classifying the H20 as a restricted item. Analysts at Barclays and elsewhere estimate the hit at $4-5 billion for the H20 alone. Layered on top of Nvidia's broader China datacenter exposure, previously around 13-20% of revenue, the total could reach $10-12 billion or more annually.

The Evolution of U.S. Export Controls

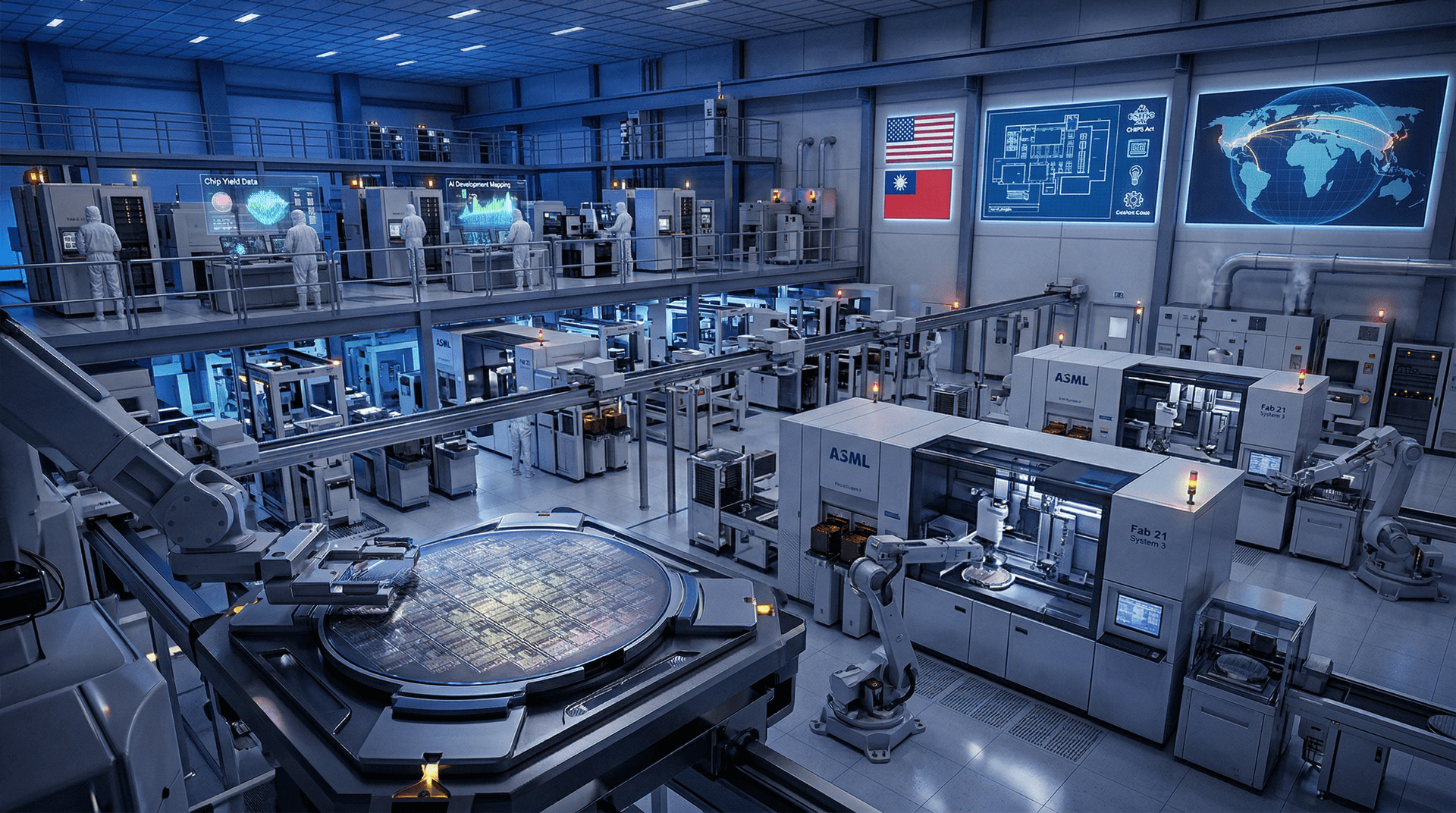

This isn't Nvidia's first rodeo with U.S.-China trade frictions. Since 2022, Washington has progressively tightened restrictions on advanced semiconductors to curb China's military AI capabilities. The Biden administration's latest salvo, building on rules from August 2023 and January 2024, targets AI accelerators paired with advanced high-bandwidth memory. The H20, a cut-down variant of the blockbuster H100, was Nvidia's compromise: it carried 96GB of HBM3 memory but cut compute throughput far below the H100's, aiming to stay under the performance thresholds while still capturing China's voracious demand for AI hardware.

Yet the rules shifted after the chip was designed. The October update invoked the "foreign direct product rule," which extends U.S. controls to foreign-made items produced with U.S. technology. China, which accounted for 17% of Nvidia's Q3 fiscal 2025 revenue (down from 26% before the crackdown), remains a linchpin. Without the H20, Nvidia must redirect that inventory elsewhere; demand from U.S. hyperscalers such as Microsoft and Amazon remains intense, though not unlimited.

Immediate Market Fallout

Nvidia's stock dipped nearly 3% in after-hours trading on December 13, erasing some of its post-earnings glow. Shares had surged 180% year-to-date on AI enthusiasm, pushing the company's market cap above $3.3 trillion, but the disclosure underscores its exposure to policy risk. The PHLX Semiconductor Index fell 1.5%, with peers such as AMD and Broadcom also feeling the heat.

Macro implications loom large. Grand View Research projects AI infrastructure spending will reach $200 billion in 2025, but supply constraints could inflate costs: U.S. firms face higher prices, while Chinese competitors gain breathing room. "Geopolitical risk is now priced into every AI trade," noted Wedbush analyst Daniel Ives. Nvidia's gross margins, already down to about 75%, could compress further if China sales evaporate without offsets.

| Metric | Pre-Crackdown (FY24) | Projected FY25 Impact |
|--------|----------------------|-----------------------|
| China Datacenter Revenue | ~$15B | -$10-12B loss |
| Total Revenue | $96B | $130B+ target at risk |
| Stock Price Reaction | N/A | -2.8% (Dec 13) |
| Global AI CapEx | $100B | $200B forecast |

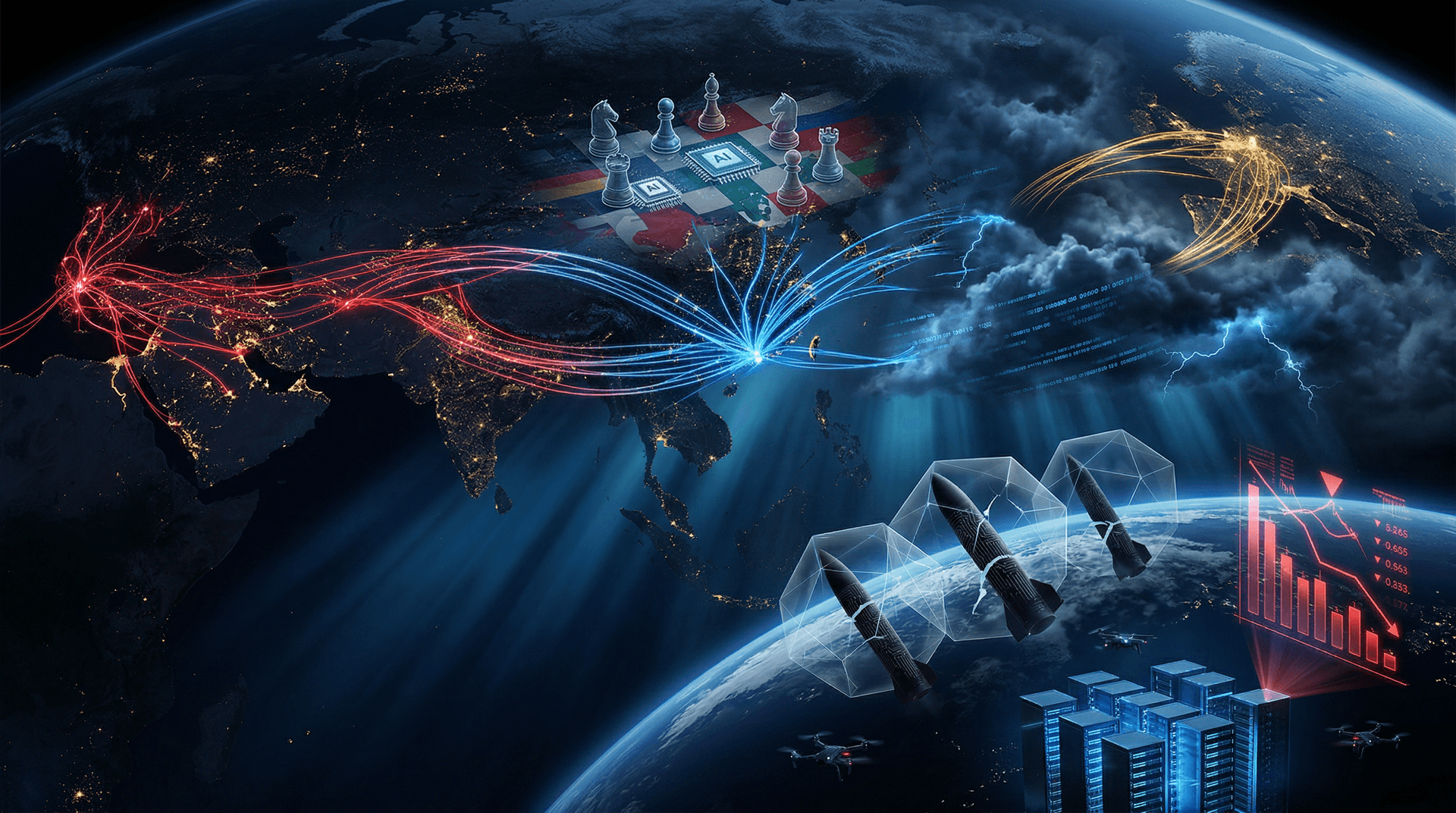

Geopolitical Chessboard: U.S. vs. China AI Race

At its core, this is a strategic contest. The U.S. views China's AI ascent, fueled by firms like Huawei, Baidu, and Alibaba, as a national security threat. Beijing's "Made in China 2025" and military-civil fusion doctrines prioritize indigenous chips. U.S. hawks, including outgoing Commerce Secretary Gina Raimondo, argue the curbs buy time for American dominance.

China retaliated in 2023 with export restrictions on gallium and germanium, both critical to chipmaking. Now Huawei's Ascend 910C, reportedly benchmarked near H100 levels, is ramping production, and DeepSeek and Alibaba's Qwen models increasingly rival OpenAI's offerings. President Xi Jinping's December 18 Politburo meeting emphasized AI self-reliance, signaling accelerated state subsidies, potentially $100 billion or more via vehicles such as the National Integrated Circuit Industry Investment Fund (the "Big Fund").

For markets, this is a zero-sum game. U.S.-aligned AI leaders such as Nvidia and TSMC thrive in the short term, but long-term fragmentation risks a bifurcated ecosystem: Western LLMs vs. Chinese alternatives. Geopolitics amplifies volatility; Trump's incoming administration has vowed an even tougher stance, possibly invoking the International Emergency Economic Powers Act (IEEPA) for broader sanctions.

Ripple Effects Across the AI Ecosystem

Hyperscalers are scrambling. Microsoft Azure and Amazon Web Services (AWS) rely on Nvidia for 80%+ of their AI workloads, but their China operations (e.g., Azure China) need alternatives. AMD's MI300X is gaining ground, but its production capacity lags. Startups such as Elon Musk's xAI (maker of Grok) and Anthropic face pricier GPUs amid shortages.

Macro tailwinds persist: Goldman Sachs estimates AI could lift global GDP by 7% over 10 years. Yet export curbs could slow global adoption even as energy demands climb, with AI data centers projected to consume 8% of U.S. power by 2030. Investors are eyeing diversification: Intel's Gaudi 3, Cerebras, or even Qualcomm's edge AI.

China's workaround? Smuggling through Singapore and Malaysia (the U.S. Bureau of Industry and Security, or BIS, has flagged $2 billion in illicit H100 flows) or cloud access to U.S. models. Chinese developers can also reach OpenAI's API indirectly, blurring enforcement lines.

Nvidia's Playbook and Investor Outlook

Huang remains bullish: "AI is just getting started." Nvidia's Blackwell platform (B200) is reportedly sold out through 2026, and gross margins held near 75% in Q3. In China, Nvidia could push lower-end chips or lean on software optimizations. Longer term, sovereign AI programs in the UAE and Saudi Arabia could help fill the gap.

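Valuation looks stretched, at roughly 50 times forward earnings. Bears cite bubble risks; bulls point to a $1 trillion datacenter total addressable market (TAM). Key things to watch: Nvidia's fourth-quarter fiscal 2025 earnings in late February 2025 and policy signals from the incoming Trump administration.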

Conclusion: AI's Geopolitical Reckoning

Nvidia's December 13 disclosure crystallizes the macro pivot: AI isn't just tech; it's ground zero for U.S.-China rivalry. Billions in forgone sales underscore supply chain fragility, forcing markets to grapple with a shift from globalization toward economic nationalism. As 2024 closes, investors must balance explosive growth against escalating risks. The real winners? Those hedged across both worlds.
