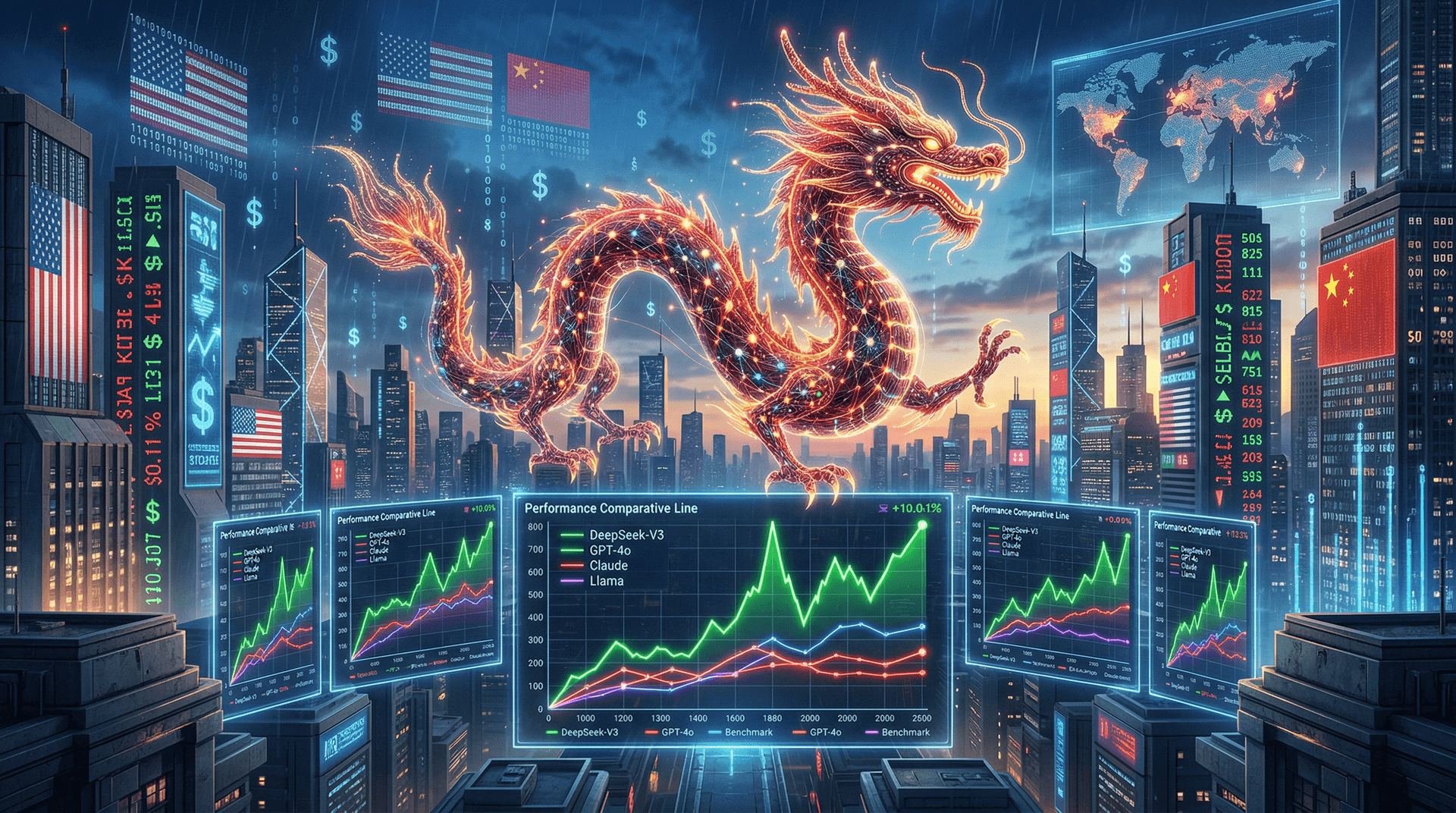

In a seismic shift for the artificial intelligence landscape, Chinese startup DeepSeek released its flagship DeepSeek-V3 model on December 26, 2024. This Mixture-of-Experts (MoE) model, with 671 billion total parameters but only 37 billion active per token, is reported by DeepSeek to match or beat industry leaders like OpenAI's GPT-4o, Anthropic's Claude 3.5 Sonnet, and Meta's Llama 3.1 405B on many key benchmarks. DeepSeek puts the final training run, on 14.8 trillion tokens, at $5.576 million in GPU rental cost, a figure that excludes earlier research and experiments. That is roughly 5.6% of GPT-4's estimated $100 million cost. The code is released under the MIT license and the weights under DeepSeek's own model license, which permits commercial use, and the release is poised to disrupt markets, geopolitics, and macroeconomics.
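The headline cost and sparsity figures can be checked with simple arithmetic. The sketch below is illustrative only: the GPU-hour count and the $2 hourly rental rate are assumptions taken from DeepSeek's technical report rather than from this article, and the $100 million GPT-4 figure is the outside estimate quoted above.

```python
# Back-of-the-envelope check of the training-cost and sparsity figures.
# ASSUMPTIONS (from DeepSeek's technical report, not this article):
#   about 2.788 million H800 GPU-hours, priced at $2 per GPU-hour.
GPU_HOURS = 2_788_000
USD_PER_GPU_HOUR = 2.00
GPT4_ESTIMATED_COST_USD = 100_000_000  # outside estimate quoted in the article

TOTAL_PARAMS_B = 671   # total parameters, in billions
ACTIVE_PARAMS_B = 37   # parameters active per token, in billions


def training_cost_usd(gpu_hours, usd_per_gpu_hour):
    """Rental-cost estimate: GPU-hours multiplied by the hourly price."""
    return gpu_hours * usd_per_gpu_hour


v3_cost = training_cost_usd(GPU_HOURS, USD_PER_GPU_HOUR)
ratio = v3_cost / GPT4_ESTIMATED_COST_USD
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B

print(f"V3 final-run cost: ${v3_cost:,.0f}")
print(f"Share of GPT-4 estimate: {ratio:.1%}")
print(f"Parameters active per token: {active_fraction:.1%}")
```

Under these assumptions the final run costs a few percent of the GPT-4 estimate, and each token touches only a small fraction of the model's parameters.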

The Technical Marvel Behind DeepSeek-V3

DeepSeek, backed by High-Flyer Quant Investment, has been climbing the AI ranks since its V2 model in May 2024 stunned observers with cost-effective performance. V3 extends this strategy with an MoE architecture in which a router activates only a few specialized 'expert' sub-networks per token, so each token uses about 37 billion of the 671 billion parameters and inference compute falls well below that of a dense model of the same total size. Key innovations include:

- Multi-Token Prediction (MTP): The model is trained to predict additional future tokens at each position, which gives a denser training signal and can be reused for speculative decoding at inference time.

- Auxiliary-Loss-Free Load Balancing: Keeps expert utilization even by adjusting a per-expert routing bias, rather than adding an auxiliary loss term that can hurt model quality.

- FP8 Mixed-Precision Training: Reduces memory use and speeds up training compute relative to 16-bit formats, lowering the hardware cost of a training run.
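To make the routing and load-balancing ideas concrete, here is a minimal toy sketch of top-k expert routing with a bias-based balancing rule. It is a simplified illustration under stated assumptions, not DeepSeek's implementation: the expert count, `TOP_K`, `BIAS_STEP`, the random scores, and the artificial skew are all hypothetical values chosen for the demo.

```python
import random

NUM_EXPERTS = 8    # hypothetical toy size; the real model has many more experts
TOP_K = 2          # hypothetical number of experts activated per token
BIAS_STEP = 0.01   # hypothetical bias update speed


def route(scores, bias, k):
    """Return the indices of the k experts with the highest score + bias.

    The bias only influences which experts are selected; in a real model
    the output weighting would still come from the unbiased scores.
    """
    ranked = sorted(range(len(scores)),
                    key=lambda e: scores[e] + bias[e],
                    reverse=True)
    return ranked[:k]


def update_bias(bias, load, step):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean_load = sum(load) / len(load)
    new_bias = []
    for b, n in zip(bias, load):
        if n > mean_load:
            new_bias.append(b - step)
        elif n < mean_load:
            new_bias.append(b + step)
        else:
            new_bias.append(b)
    return new_bias


def spread(load):
    """Gap between the busiest and the idlest expert."""
    return max(load) - min(load)


def simulate(num_batches=100, tokens_per_batch=1000, seed=0):
    """Route random tokens batch by batch, updating the bias after each batch."""
    rng = random.Random(seed)
    # Artificial skew: the toy router initially favours low-index experts.
    skew = [0.30 - 0.04 * e for e in range(NUM_EXPERTS)]
    bias = [0.0] * NUM_EXPERTS
    first_load = None
    load = []
    for _ in range(num_batches):
        load = [0] * NUM_EXPERTS
        for _ in range(tokens_per_batch):
            scores = [rng.random() + skew[e] for e in range(NUM_EXPERTS)]
            for e in route(scores, bias, TOP_K):
                load[e] += 1
        if first_load is None:
            first_load = load
        bias = update_bias(bias, load, BIAS_STEP)
    return first_load, load


first, last = simulate()
print("load spread, first batch:", spread(first))
print("load spread, last batch: ", spread(last))
```

No extra loss term is involved here: balance comes only from nudging the selection bias after each batch, which is the intuition behind the "auxiliary-loss-free" label.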

Benchmarks paint a compelling picture. In DeepSeek's technical report, V3 scores 85.5 on Arena-Hard, ahead of GPT-4o (80.4) and narrowly ahead of Claude 3.5 Sonnet (85.2). In math (MATH-500), it hits 90.2% vs. Claude 3.5 Sonnet's 78.3%. On the coding benchmark LiveCodeBench, V3 reaches 40.5% (pass@1 with chain-of-thought) against 33.4% for GPT-4o. These results are DeepSeek's own measurements rather than independent verification, but they point to an edge in reasoning, math, and code generation, all core to enterprise AI applications.

The model's API pricing further amplifies its appeal: $0.27 per million input tokens and $1.10 per million output tokens. That is roughly 89% below GPT-4o's list prices of $2.50 and $10.00 per million tokens, though still above GPT-4o-mini's $0.15 and $0.60.
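As a rough illustration of what these prices mean for a workload, the sketch below estimates a monthly bill. The V3 prices are the ones quoted above; the GPT-4o list prices ($2.50 and $10.00 per million tokens) and the monthly token volumes are assumptions added for comparison, not figures from DeepSeek.

```python
# Per-million-token prices in USD. V3 figures are from the article;
# the GPT-4o figures are ASSUMED list prices used only for comparison.
V3_PRICES = {"input": 0.27, "output": 1.10}
GPT4O_PRICES = {"input": 2.50, "output": 10.00}


def workload_cost_usd(input_tokens, output_tokens, prices):
    """Cost of a workload given per-million-token input and output prices."""
    return (input_tokens * prices["input"]
            + output_tokens * prices["output"]) / 1_000_000


# Hypothetical monthly workload: 500M input tokens, 100M output tokens.
monthly_in, monthly_out = 500_000_000, 100_000_000

v3_bill = workload_cost_usd(monthly_in, monthly_out, V3_PRICES)
gpt4o_bill = workload_cost_usd(monthly_in, monthly_out, GPT4O_PRICES)
saving = 1 - v3_bill / gpt4o_bill

print(f"V3 monthly bill:     ${v3_bill:,.2f}")
print(f"GPT-4o monthly bill: ${gpt4o_bill:,.2f}")
print(f"Saving with V3:      {saving:.0%}")
```

Actual bills depend on the input/output mix, caching discounts, and any promotional pricing, none of which this sketch models.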

Market Ripples: Nvidia, Hyperscalers, and Startups Feel the Heat

Released amid a buoyant 2024 for AI stocks, a year in which Nvidia's market cap climbed past $3 trillion, DeepSeek-V3 arrives as markets grapple with 'AI fatigue.' Investors poured roughly $200 billion into AI infrastructure in 2024, but returns remain elusive for many. V3's efficiency challenges this capex-heavy paradigm.

Nvidia, the AI GPU kingpin, reportedly saw shares dip about 2% after the announcement as fears mounted over commoditized inference. Hyperscalers like AWS, Azure, and Google Cloud, locked in arms races for compute, face pressure to optimize. Smaller players rejoice: startups can now deploy frontier-level AI without $100K/month GPU bills.

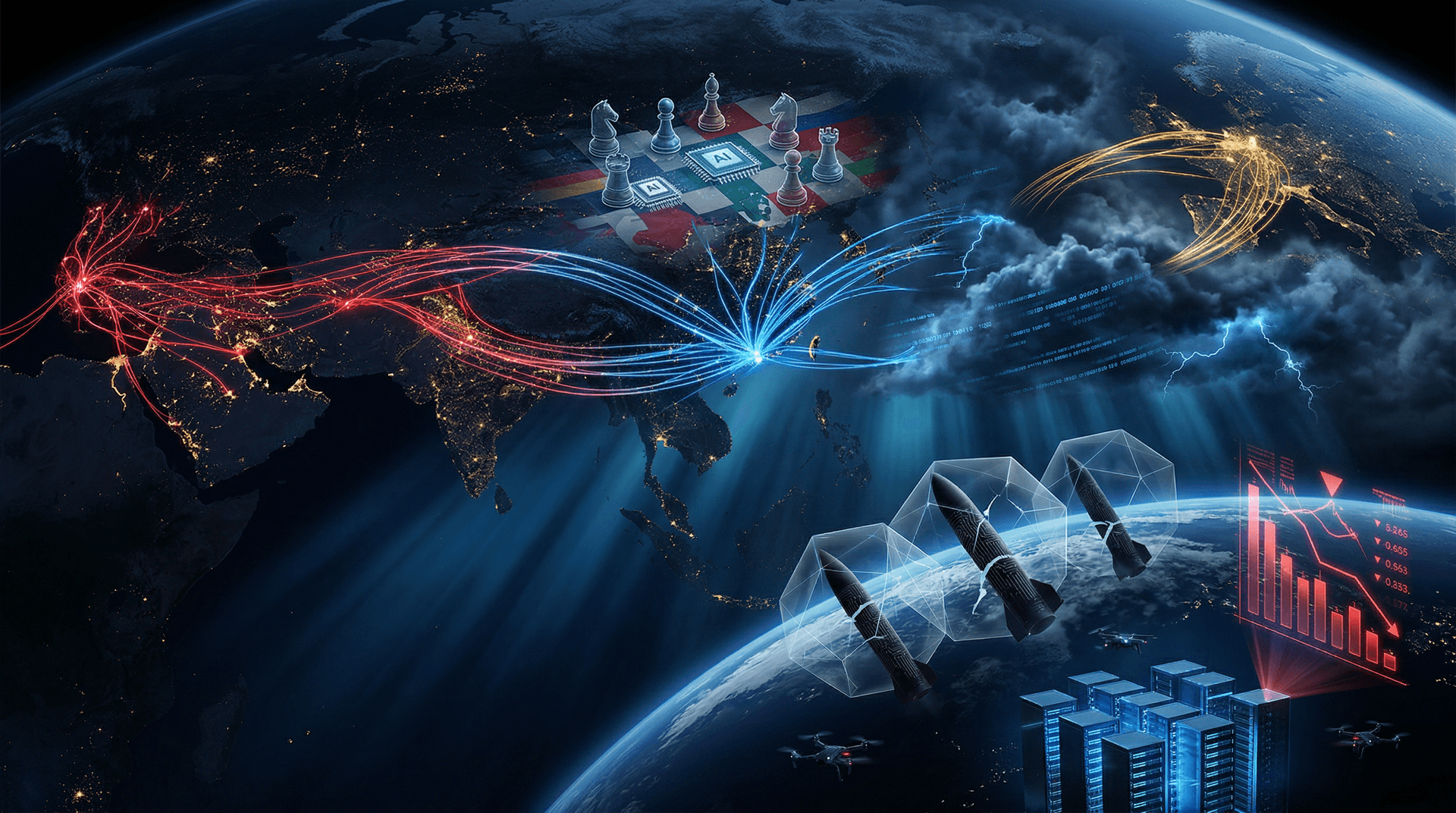

Macro implications are profound. Goldman Sachs estimates that generative AI could raise global GDP by about 7%, or nearly $7 trillion, over a 10-year period, a gain that is easier to realize if costs plummet. V3's blueprint of efficient MoE scaling validates 'lean AI' theses, potentially accelerating adoption in emerging markets where compute is scarce. U.S. AI firms like OpenAI and xAI, burning billions on closed models, must pivot or risk obsolescence.

Geopolitical Fault Lines: US-China AI Decoupling Accelerates

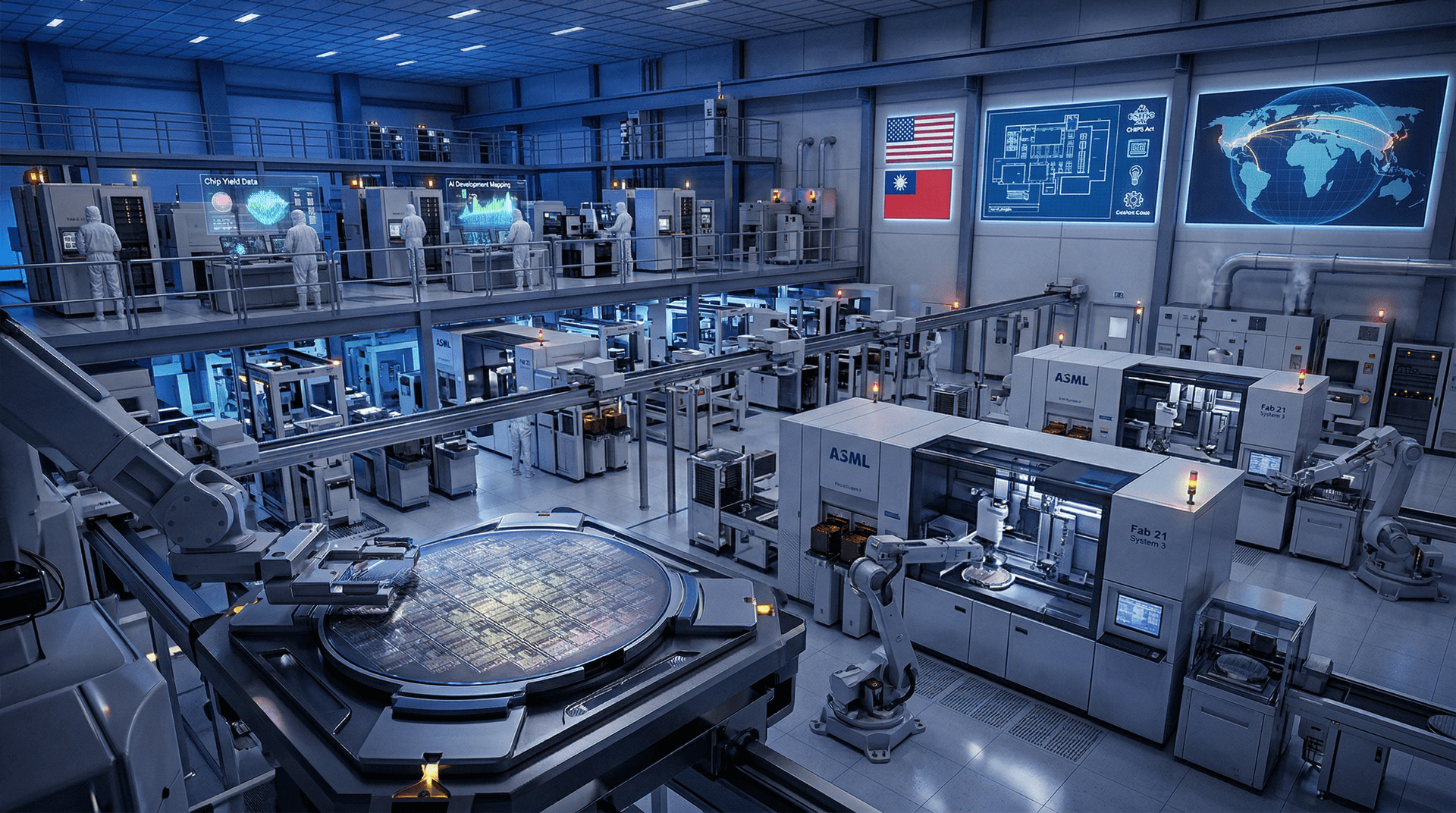

The launch reignites the U.S.-China tech cold war. Beijing's 'Made in China 2025' program and $47 billion semiconductor fund form the backdrop, though DeepSeek itself is privately funded by High-Flyer. V3 was trained on a cluster of 2,048 Nvidia H800 GPUs, a variant built to comply with U.S. export controls on the H100, showing that frontier-level training is possible on restricted hardware.

Washington has been responding for some time. The 2022 CHIPS Act's roughly $52 billion in funding aims to reclaim the semiconductor lead, but events like this expose gaps. Biden's AI executive order and proposed export bans on AI software underscore escalating tensions. Analysts at Brookings warn of a bifurcated AI ecosystem: U.S.-led closed models vs. China's open-source surge.

For global macro, this means supply chain diversification. Europe eyes neutral models like Mistral's, while India and Southeast Asia leverage V3 for sovereign AI. Trade frictions could inflate AI costs 20-30%, per McKinsey, hampering productivity gains.

Enterprise and Developer Gold Rush

Developers flocked to Hugging Face, reportedly downloading V3 in large numbers within hours of release. Fine-tuning recipes for RAG, agents, and multimodal extensions democratize high-end AI. Enterprises in finance (quant trading), healthcare (drug discovery), and logistics (optimization) stand to gain from low-latency, cheap inference.

Case in point: A Beijing hedge fund reportedly backtested V3 on historical data, yielding 15% alpha over GPT-4o in strategy generation. Wall Street titans like Citadel and Jane Street experiment cautiously, wary of IP risks but enticed by economics.

Challenges and Road Ahead

Skeptics note the limits of benchmarks: real-world tasks such as long-context reasoning lag. Hallucinations persist, and safety alignment trails Western models. Geopolitically, U.S. firms decry potential military dual use, inviting CFIUS scrutiny of Chinese AI investments.

Yet, DeepSeek's trajectory—V2 to V3 in seven months—signals relentless iteration. CEO Liang Wenfeng hints at V4 by mid-2025, eyeing AGI. For markets, expect volatility: AI indices like BOTZ may consolidate as efficiency trumps scale.

Conclusion: A New Era of Accessible Intelligence

DeepSeek-V3 isn't just a model; it's a manifesto for efficient AI in a resource-constrained world. As 2024 closes with U.S. indices at records—S&P 500 up 25%—this Chinese upstart reminds investors: in AI, brains beat brute force. Geopolitics will intensify, markets will adapt, but one truth endures—innovation knows no borders. Watch for V3's deployment stats in Q1 2025 to gauge true impact.
